LLM SEO means optimising content for AI-powered search engines that use large language models to generate answers, not just rank links. If your content isn’t structured for how these systems work, you risk being invisible to a growing share of searches.

What does LLM SEO actually mean?

Large language models are what power AI search tools like ChatGPT, Perplexity and Google’s AI Overviews (and also the weird images your friends keep sending you on WhatsApp). Instead of just crawling pages and returning a list of links, these systems read and summarise content to give you a direct answer. Sometimes they cite their sources; the problem for SEOs is that often they don’t.

Traditional SEO is about getting a page ranked in search engine results pages. LLM SEO is about getting your content extracted, understood and then mentioned inside an AI-generated response. The signals are different. The structure matters more. And keyword density matters much less than it used to.

A related term you’ll see: GEO SEO, where GEO stands for Generative Engine Optimisation (this industry is all about the acronyms). It’s often used interchangeably with LLM SEO, though GEO tends to focus specifically on getting cited by generative AI engines rather than on the broader optimisation work. In practice, both mean the same thing.

How has search behaviour changed because of LLMs?

Three things are worth understanding here, because they change what “good content” looks like.

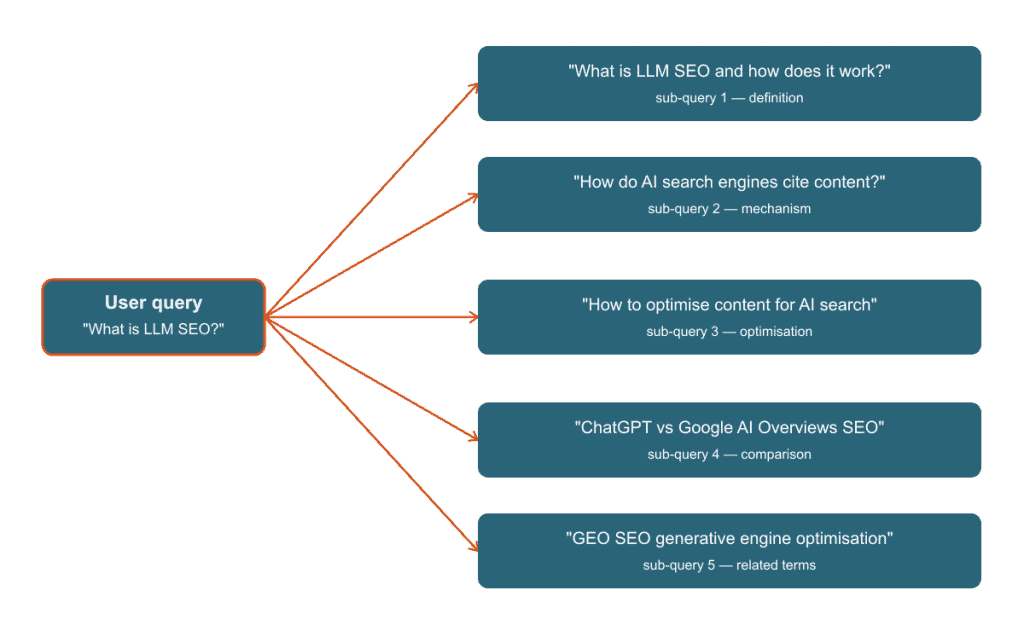

- Query fan-out

  - When someone types a prompt into an AI search engine, the model doesn’t run just one search. It generates multiple sub-queries to build its answer.

  - Google’s Head of Search Elizabeth Reid confirmed this at Google I/O 2025, describing how AI Mode “breaks down your question into subtopics and issues a multitude of queries simultaneously.”

  - Simple queries can trigger several sub-queries; complex questions can trigger many more. That means your content might get pulled in to answer a question you didn’t directly target, as long as it’s semantically relevant.

- Recency bias

  - AI search engines prefer fresher content. An Ahrefs study analysing 17 million citations found that AI-cited pages are on average 25.7% fresher than the top traditional Google results for the same query – with ChatGPT showing the strongest bias, citing pages that were hundreds of days newer than typical organic results.

  - Updating existing content regularly has always been good housekeeping, but now it can affect whether AI systems pick it up.

- Semantic chunking

  - LLMs don’t read pages the way humans do. The systems behind AI search split pages into chunks, typically under 500 tokens (roughly 375 words), and process meaning chunk by chunk.

  - Much like the youth of today, long, dense paragraphs don’t process well. Short, self-contained sections do. If a paragraph doesn’t make sense on its own, it’s harder for an AI to extract and cite accurately.

Google’s traditional ranking signals don’t work like that, and keyword matching only gets you so far here. AI engines are built to give clear answers to specific questions, so content that’s easy to read in chunks wins.
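To make the chunking point concrete, here’s a minimal Python sketch of how a retrieval pipeline *might* split a page. It assumes paragraphs (blank-line separated) are the split points and uses the rule of thumb from above (500 tokens is roughly 375 words, about 0.75 words per token) instead of a real tokenizer. AI search engines don’t publish their chunking logic, so `chunk_page`, `estimate_tokens` and the 500-token budget are illustrative assumptions.

```python
# Illustrative only: real AI search engines don't publish how they chunk pages.
# Assumption: ~0.75 words per token, so a 500-token budget is roughly 375 words.
WORDS_PER_TOKEN = 0.75
MAX_TOKENS = 500


def estimate_tokens(text: str) -> int:
    """Rough token estimate from a word count (not a real tokenizer)."""
    return round(len(text.split()) / WORDS_PER_TOKEN)


def chunk_page(page: str, max_tokens: int = MAX_TOKENS) -> list[str]:
    """Group whole paragraphs into chunks that stay under max_tokens.

    A paragraph longer than the budget on its own gets cut mid-thought,
    which is why long, dense paragraphs extract badly.
    """
    paragraphs = [p.strip() for p in page.split("\n\n") if p.strip()]
    max_words = int(max_tokens * WORDS_PER_TOKEN)
    chunks: list[str] = []
    current: list[str] = []
    current_words = 0

    for para in paragraphs:
        words = para.split()
        # Oversized paragraph: flush the current chunk, then hard-split by word count.
        if len(words) > max_words:
            if current:
                chunks.append("\n\n".join(current))
                current, current_words = [], 0
            for start in range(0, len(words), max_words):
                chunks.append(" ".join(words[start:start + max_words]))
            continue
        # Start a new chunk if adding this paragraph would exceed the budget.
        if current_words + len(words) > max_words:
            chunks.append("\n\n".join(current))
            current, current_words = [], 0
        current.append(para)
        current_words += len(words)

    if current:
        chunks.append("\n\n".join(current))
    return chunks


short_page = "\n\n".join(["One clear idea in a short paragraph."] * 3)
wall_of_text = " ".join(["word"] * 800)
print(len(chunk_page(short_page)))    # 1 chunk: all three paragraphs fit
print(len(chunk_page(wall_of_text)))  # 3 chunks: 375 + 375 + 50 words
```

Splitting on paragraph boundaries keeps each self-contained paragraph intact, while the single 800-word block gets chopped at an arbitrary word, usually mid-sentence.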

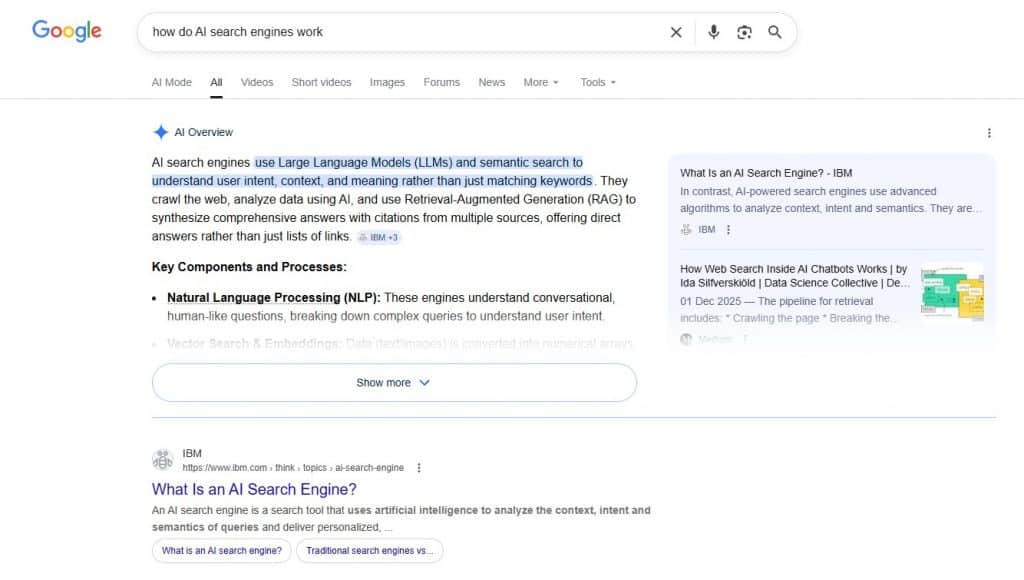

What does Google’s AI Overview mean for SEO?

AIO SEO, optimising for Google’s AI Overviews, is its own thing within the broader LLM SEO picture. AI Overviews appear at the top of Google results for a growing range of queries, generated from indexed content across the web.

What gets cited in AI Overviews tends to share some traits: direct answers near the top of the page, structured content with clear headers and content with enough depth to be worth citing.

Position one doesn’t guarantee a citation. A page sitting at position four with a cleaner structure can often win it instead.

Zero-click search is genuinely eating into organic traffic. If Google’s AI Overview answers the question fully, a meaningful portion of users won’t click through to the source. That’s worth factoring into how you think about content, especially for informational queries. The practical response isn’t to write worse content to force a click; it’s to optimise for brand visibility in AI citations and build content worth coming back to. I’m playing the long game here.

How do you optimise content for LLM search results?

- Lead with the answer

  - Get your direct answer into the first 160 characters of any page or section. AI systems extract the clearest, most direct answer available. If you hide it, it won’t get pulled.

- Use question-style headers

  - H2s and H3s phrased as questions (“How do you optimise content for LLM search results?”) match the sub-queries AI engines generate. They also help the model understand what each section is answering.

- Keep paragraphs atomic

  - Aim for under 80 words per paragraph. Each paragraph should contain one complete idea. If you need two ideas, use two paragraphs.

- Use HTML tables for comparisons and data

  - Data laid out in tables is easy for LLMs to extract and represent accurately. Comparisons written as prose are much harder to parse.

- Write for topic clusters, not keyword density

  - A page that covers a subject thoroughly and links to related content on the same site performs better in AI search than a page keyword-stuffed around a single term.

- Update content regularly

  - Because AI search leans towards newer content, your stale content can get deprioritised. Set a reminder to revisit and update content when something meaningful changes.

  - Tracking which pages are gaining or losing visibility over time makes this easier. Here’s how I set up SEO reporting without a paid tool.

- Add author schema

  - AI systems give weight to authoritativeness. Structured data that identifies an author and links to supporting information (an author page, bylines elsewhere) is a good signal.

- Write comparative and consensus content

  - Well-structured comparative content (like ‘X vs Y’ articles or best-of roundups) consistently performs well in AI citations. AirOps’ 2026 State of AI Search report found that comparison pages, listicles, and review content account for the majority of third-party AI citations. Opinion pieces and shallow content get left behind.
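For the author schema point, here’s a minimal sketch of what that structured data can look like: a schema.org `Article` with a `Person` author, emitted as JSON-LD from Python. The schema.org properties used (`author`, `url`, `sameAs`, `dateModified`) are real; the `article_schema` helper and every name, URL and date below are hypothetical placeholders. Treat the markup as one signal among many, not a guaranteed citation.

```python
import json


def article_schema(headline: str, author_name: str, author_url: str,
                   same_as: list[str], date_published: str,
                   date_modified: str) -> str:
    """Build a schema.org Article JSON-LD <script> tag with an explicit author.

    Hypothetical helper: every value passed in is a placeholder for your own page.
    """
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": date_published,
        "dateModified": date_modified,  # keep in step with real updates
        "author": {
            "@type": "Person",
            "name": author_name,
            "url": author_url,   # the on-site author page
            "sameAs": same_as,   # profiles/bylines elsewhere that confirm identity
        },
    }
    body = json.dumps(data, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'


print(article_schema(
    headline="How do you optimise content for LLM search results?",
    author_name="Example Author",                              # hypothetical
    author_url="https://example.com/about",                    # hypothetical
    same_as=["https://www.linkedin.com/in/example-author"],    # hypothetical
    date_published="2026-01-15",                               # hypothetical
    date_modified="2026-03-01",                                # hypothetical
))
```

The output goes into the page’s HTML; keeping `dateModified` accurate also ties this back to the recency point.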

| Element | Old Approach | New Approach | Why It Matters |
|---|---|---|---|
| Title | Keyword-led | Question or direct answer | Matches sub-query generation |
| Content length | Longer = better | Appropriate depth, atomic sections | AI parses in chunks under 500 tokens |
| Keywords | Density-focused | Semantic coverage of topic | LLMs match meaning, not strings |
| Structure | Flowing prose | Short paragraphs, H2s as questions | Easier extraction and citation |
| Headers | Descriptive | Question-format where possible | Aligns with how AI generates sub-queries |
| Recency | Set and forget | Regular updates | AI search prefers fresher content |
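Several rows in the table above lend themselves to a quick automated check. Below is a minimal Python sketch of a pre-publish audit. It assumes the draft is plain text with paragraphs separated by blank lines and that the first paragraph is your lead answer (no heading). The 160-character and 80-word limits come from the guidelines in this post; the 180-day staleness threshold and the `audit_draft` helper are hypothetical choices for illustration, not rules any AI search engine publishes.

```python
from datetime import date

# Limits from the guidelines above; STALE_AFTER_DAYS is a hypothetical choice.
LEAD_MAX_CHARS = 160
PARAGRAPH_MAX_WORDS = 80
STALE_AFTER_DAYS = 180


def audit_draft(text: str, last_updated: date, today: date) -> list[str]:
    """Return human-readable warnings for a plain-text draft."""
    paragraphs = [p.strip() for p in text.split("\n\n") if p.strip()]
    if not paragraphs:
        return ["Draft is empty."]
    warnings: list[str] = []

    # Lead with the answer: the first paragraph should fit in 160 characters.
    lead = paragraphs[0]
    if len(lead) > LEAD_MAX_CHARS:
        warnings.append(f"Lead is {len(lead)} chars; aim for {LEAD_MAX_CHARS} or fewer.")

    # Keep paragraphs atomic: flag anything over 80 words.
    for i, para in enumerate(paragraphs, start=1):
        words = len(para.split())
        if words > PARAGRAPH_MAX_WORDS:
            warnings.append(f"Paragraph {i} has {words} words; consider splitting it.")

    # Recency: flag drafts that haven't been touched in a while.
    age = (today - last_updated).days
    if age > STALE_AFTER_DAYS:
        warnings.append(f"Last updated {age} days ago; review for freshness.")

    return warnings


draft = "LLM SEO means optimising content for AI search.\n\n" + " ".join(["filler"] * 90)
for warning in audit_draft(draft, last_updated=date(2025, 1, 1), today=date(2026, 1, 1)):
    print(warning)
# Paragraph 2 has 90 words; consider splitting it.
# Last updated 365 days ago; review for freshness.
```

It only counts characters, words and days, so it can’t tell whether your lead actually answers the question; treat the warnings as prompts for a human edit, not a score.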

Is traditional SEO dead because of LLMs?

Not at all. Keyword research still tells you what people want to know. Backlinks still signal authority to both Google and the AI systems trained on web data. Technical SEO (crawlability, speed, structured data) still determines whether your content gets indexed at all.

What changes is how you actually do it. A page optimised only for keyword density and backlinks, with dense paragraphs and a messy structure, will underperform in AI search even if it ranks well traditionally. Most of what makes traditional SEO good still applies; you just can’t get away with the shortcuts anymore.

ChatGPT SEO and SGE SEO are terms you’ll see used for platform-specific versions of this. ChatGPT SEO refers to getting cited in ChatGPT’s search-enabled responses; SGE SEO (Search Generative Experience) was Google’s earlier framing before AI Overviews became the standard term. Different names, same brief: clear structure, direct answers, and actually knowing your subject.

SEOs who’ve been doing it properly for years are probably fine. Probably. Everyone else has some catching up to do.

What am I doing differently on de-bri.com because of this?

This post is an experiment. Its structure follows the brief I set out for this site: direct answers up front, question-format H2s, short paragraphs and a comparison table. I’m testing whether content deliberately structured for AI search performs differently, both in AI visibility and in traditional rankings.

The site is new, which means I’m starting from zero traffic data. That’s actually useful because I can track from the beginning how content structured this way performs compared to less intentionally structured posts, instead of trying to figure out what was already working.

I’ll write up what I find in a tracking post once there’s enough data to say anything meaningful. For now, the first post explains why I built this site and the second covers how I built it with Claude.

What I’m watching most closely right now: whether recency and structure signals hold up as AI search matures, or whether the systems get better at extracting meaning from less optimised content. For now, structure still clearly matters. I expect that to change as models improve, but in 2026 it’s worth building for how these systems actually work today.